> Decision Making_

Beliefs:

Beliefs represent the agent's internal model of the world: information the decision system reads when evaluating options. A belief is a small C# wrapper that exposes a typed Value (the information) and an OnChanged event so consumers can react when the sensor-driven value changes. Beliefs keep state decoupled from decision logic so agents can reason about the world without polling sensors directly.

The core abstraction is Belief<T>, a minimal class that stores the typed Value and provides a way to notify listeners.

```csharp
using System;

public abstract class Belief<T> : IDisposable
{
    public T Value { get; protected set; }

    public event Action OnChanged;

    protected void NotifyChanged() => OnChanged?.Invoke();

    // Dispose() (required by IDisposable) is omitted from this excerpt.
}
```

A concrete example, Belief_ItemsInRoom, tracks how many items the agent believes are in each room. It acts as a thin adapter over a RoomDetector sensor: it subscribes to the detector's OnItemsInCurrentRoomChanged event, updates the stored count for that room in its Value (a Dictionary<Room, int>), and notifies listeners. When choosing a desire, agents either read the belief's Value or subscribe to OnChanged to react immediately. The belief provides a stable, typed interface so agents don't need to know sensor details.

```csharp
public Belief_ItemsInRoom(List<Room> rooms, RoomDetector roomDetector)
{
    _roomDetector = roomDetector;

    // match each room with how many items agent believes it has
    Value = new Dictionary<Room, int>();
    foreach (var room in rooms)
    {
        Value[room] = room.ItemsInRoom.Count;
    }

    // When item in agent's current room changes, update its belief
    _roomDetector.OnItemsInCurrentRoomChanged += UpdateItemsInRoom;
}

private void UpdateItemsInRoom(Room room)
{
    if (Value.ContainsKey(room))
    {
        Value[room] = room.ItemsInRoom.Count;

        // when a belief changes we can notify
        // the agent to recalculate its decision
        NotifyChanged();
    }
}
```

Desires:

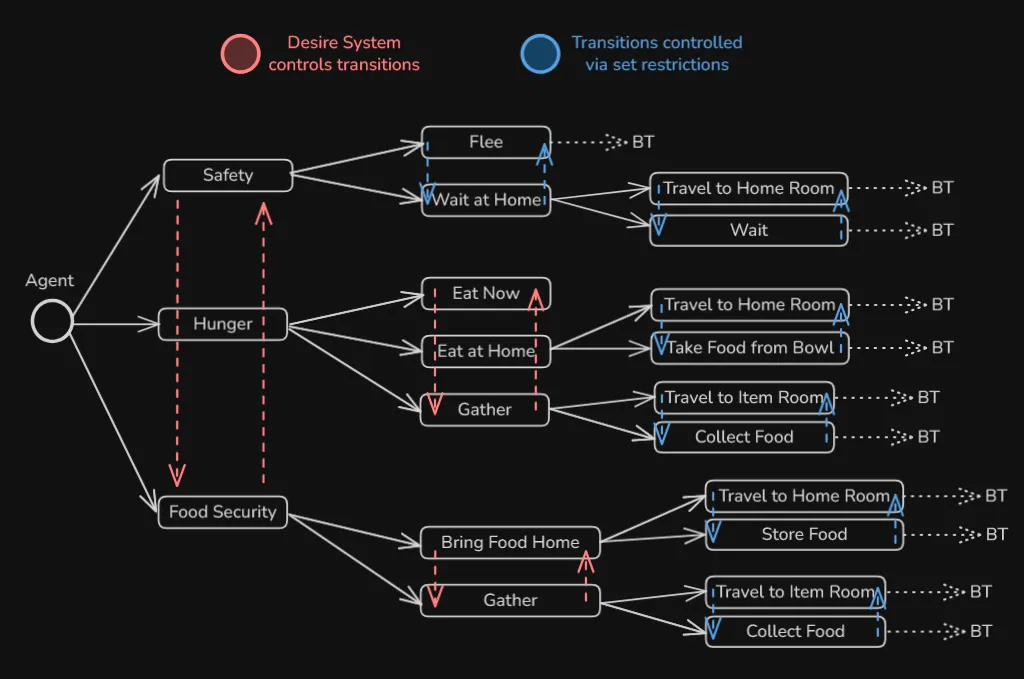

A desire system evaluates the agent's beliefs to determine what it wants to do next. It sits between beliefs (what the agent knows) and intentions (what the agent is actually doing), using domain-specific logic to score or prioritize goals. When a desire changes, it triggers the agent to switch to a new behavioural state.
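To make "score or prioritize" concrete, here is a minimal sketch of the scoring approach. Everything in it is hypothetical and not taken from the project: the Goal enum, the WeightedDesireScorer class, the weights, and the assumption that each input has already been normalized to the range 0 to 1.

```csharp
// Hypothetical goals for illustration; the article's real states live in States_Agent.
public enum Goal { Safety, Hunger, FoodSecurity }

public static class WeightedDesireScorer
{
    // Inputs are assumed to be normalized to 0..1.
    // The weights (3, 2, 1) are arbitrary illustration values.
    public static Goal Choose(float threat, float hunger, float inventoryFullness)
    {
        var candidates = new (Goal goal, float score)[]
        {
            (Goal.Safety,       3.0f * threat),
            (Goal.Hunger,       2.0f * hunger),
            (Goal.FoodSecurity, 1.0f * (1f - inventoryFullness)),
        };

        // Pick the highest score; on a tie the earlier entry wins.
        var best = candidates[0];
        foreach (var candidate in candidates)
        {
            if (candidate.score > best.score)
                best = candidate;
        }
        return best.goal;
    }
}
```

With these weights, a strong threat outranks everything else, while an agent with no threat, mild hunger, and a nearly empty inventory ends up pursuing food security. A strict priority chain is easier to reason about; scoring trades that predictability for smoother blending between goals.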

The core abstraction, DesireSystem<T, X>, defines how to evaluate beliefs and notify the state machine when a new desire is chosen. Concrete implementations override CheckForDesireChange() to examine relevant beliefs and decide on the next goal using custom logic, whether that's priority-based (threat > hunger > food security) or weighted scoring (inventory amount + hunger level).

```csharp
public abstract class DesireSystem<T, X> where T : BeliefHolder
{
    public T BeliefHolder { get; protected set; }

    public IHaveDesires<X> DesireReactor { get; }

    public DesireSystem(T beliefHolder, IHaveDesires<X> desireReactor)
    {
        BeliefHolder = beliefHolder;
        DesireReactor = desireReactor;
    }

    public abstract void CheckForDesireChange();
}
```

The concrete class DesireSystem_Agent evaluates two beliefs, threat detection and hunger level, and applies a priority hierarchy to choose the agent's next desire. When a threat is near, safety takes top priority; otherwise, if the agent is hungry, hunger drives behavior; if neither applies, the agent falls back to food security:

```csharp
public override void CheckForDesireChange()
{
    // Food security is the default; higher-priority desires override it.
    var newDesire = States_Agent.FoodSecurity;

    if (ShouldSafety())
        newDesire = States_Agent.Safety;
    else if (ShouldHunger())
        newDesire = States_Agent.Hunger;

    ChangeDesire(newDesire);
}

private bool ShouldSafety()
{
    // Entering Safety requires a detected threat inside the danger radius.
    if (_currentDesire != States_Agent.Safety)
    {
        return _beliefThreatDetection.Value &&
               _beliefThreatDetection.UnitDistanceFromMe <
               _beliefThreatDetection.DetectorDangerRadius;
    }

    // Already in Safety: stay while the unit remains inside the danger radius.
    return _beliefThreatDetection.UnitDistanceFromMe <
           _beliefThreatDetection.DetectorDangerRadius;
}

private bool ShouldHunger()
{
    // Enter Hunger once hunger is low...
    if (_currentDesire != States_Agent.Hunger)
        return _beliefHungerLevel.BeliefHungerLevel.IsHungerLow();

    // ...and stay in it until hunger is satisfactory again.
    return !_beliefHungerLevel.BeliefHungerLevel.IsHungerSatisfactory();
}
```

Intentions:

An intention is the agent's current plan of action, the active state the state machine is executing at a given moment.

The top-level state machine, StateMachine_Agent<T>, implements IHaveDesires<T> so it knows when the desire changes. Its DesireChange() method calls RequestStateChange() to transition to the new state, bridging the gap between decision and execution.

```csharp
public void DesireChange(States_Agent desiredState)
{
    if (desiredState != ActiveStateName)
    {
        RequestStateChange(desiredState);
    }
}
```

The architecture also supports hierarchical intentions: states like StateMachine_Safety, StateMachine_Hunger, and StateMachine_FoodSecurity are themselves sub-state machines, so each broad intention can manage its own internal state transitions until a higher-level desire override occurs.

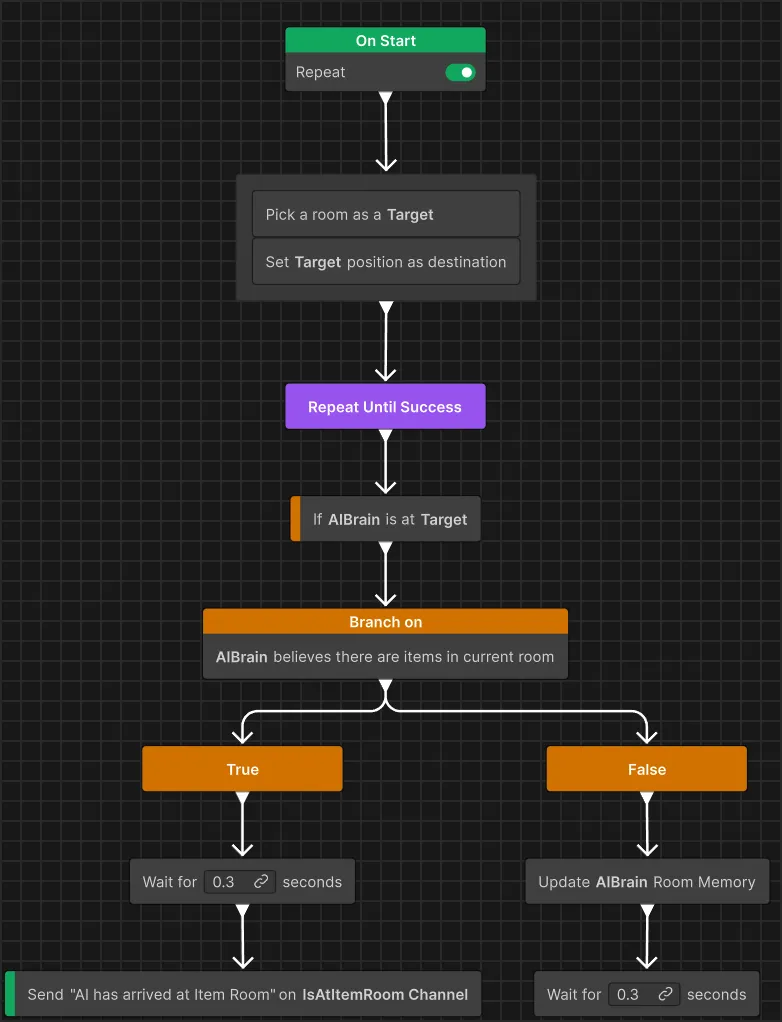
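The nesting described above can be sketched with a state machine that is itself a state. This is a simplified illustration under stated assumptions, not the project's implementation: IState, LogState, SimpleStateMachine, and the state names used in HierarchyDemo are all hypothetical, and string keys stand in for the States_Agent enum.

```csharp
using System.Collections.Generic;

public interface IState
{
    void OnEnter();
    void OnExit();
}

// Leaf state that only records enter/exit calls (illustration only).
public class LogState : IState
{
    private readonly string _name;
    private readonly List<string> _log;

    public LogState(string name, List<string> log) { _name = name; _log = log; }

    public void OnEnter() => _log.Add("enter " + _name);
    public void OnExit() => _log.Add("exit " + _name);
}

// A state machine that implements IState, so machines can be nested.
public class SimpleStateMachine : IState
{
    private readonly Dictionary<string, IState> _states = new Dictionary<string, IState>();
    private readonly string _startState;
    private IState _active;

    public string ActiveStateName { get; private set; }

    public SimpleStateMachine(string startState) { _startState = startState; }

    public void AddState(string name, IState state) => _states[name] = state;

    public void RequestStateChange(string name)
    {
        if (name == ActiveStateName) return;
        _active?.OnExit();          // exits the whole active subtree
        _active = _states[name];
        ActiveStateName = name;
        _active.OnEnter();          // a nested machine enters its start state
    }

    public void OnEnter() => RequestStateChange(_startState);

    public void OnExit()
    {
        _active?.OnExit();
        _active = null;
        ActiveStateName = null;
    }
}

public static class HierarchyDemo
{
    public static List<string> Run()
    {
        var log = new List<string>();

        var hunger = new SimpleStateMachine("FindFood");
        hunger.AddState("FindFood", new LogState("FindFood", log));
        hunger.AddState("Eat", new LogState("Eat", log));

        var safety = new SimpleStateMachine("Flee");
        safety.AddState("Flee", new LogState("Flee", log));

        var root = new SimpleStateMachine("Hunger");
        root.AddState("Hunger", hunger);
        root.AddState("Safety", safety);

        root.OnEnter();                     // enters Hunger, then FindFood
        hunger.RequestStateChange("Eat");   // internal transition inside Hunger
        root.RequestStateChange("Safety");  // higher-level override exits Eat
        return log;
    }
}
```

The point of the design is that the root machine never needs to know which sub-state is active: exiting a sub-machine exits whatever it was doing, and entering it restarts from its own start state.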

Once a state is entered, it often hands off execution to a behaviour graph using Unity's behaviour tree library. For example, a state like State_TravelToItemRoom loads a BehaviorGraph from the lookup, assigns it to the agent's BehaviorGraphAgent, sets blackboard variables, and then lets that graph drive the low-level action logic:

```csharp
public override void OnEnter()
{
    if (AIContext.BehaviorGraphLookup.TryGetValue(
            AgentGraphs.TravelToItemRoom,
            out BehaviorGraph bg))
    {
        // the behavior graph runs once it is set
        AIContext.BehaviorGraphAgent.Graph = bg;
        AIContext.BehaviorGraphAgent.SetVariableValue("AIBrain", _aiBrain);
    }
}
```

The Behaviour Tree for the "Travel to Item Room" state.
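Stepping back from the behaviour graph, the full chain described in this article, from a belief changing to the state machine switching state, can be sketched in one small example. It is a simplified stand-in rather than the project's code: HungerBelief, IReactToDesires, MiniDesireSystem, MiniStateMachine, and the 0.3 and 0.8 thresholds are all hypothetical, and it assumes the desire system re-evaluates whenever the belief raises OnChanged. The two thresholds mirror the enter/stay split used in ShouldHunger().

```csharp
using System;

public enum AgentState { FoodSecurity, Hunger }

// Stand-in for a Belief<float>: a value plus a change event.
public class HungerBelief
{
    // Assumed scale: 1 = full, 0 = starving.
    public float Value { get; private set; } = 1f;
    public event Action OnChanged;

    public void Set(float value)
    {
        Value = value;
        OnChanged?.Invoke();
    }
}

// Stand-in for IHaveDesires<X>.
public interface IReactToDesires
{
    void DesireChange(AgentState desired);
}

public class MiniDesireSystem
{
    private readonly HungerBelief _hunger;
    private readonly IReactToDesires _reactor;
    private AgentState _current = AgentState.FoodSecurity;

    public MiniDesireSystem(HungerBelief hunger, IReactToDesires reactor)
    {
        _hunger = hunger;
        _reactor = reactor;
        // Re-evaluate the desire every time the belief changes.
        _hunger.OnChanged += CheckForDesireChange;
    }

    public void CheckForDesireChange()
    {
        // Two thresholds: become hungry below 0.3, stay hungry until 0.8.
        bool hungry = _current == AgentState.Hunger
            ? _hunger.Value < 0.8f
            : _hunger.Value < 0.3f;

        var next = hungry ? AgentState.Hunger : AgentState.FoodSecurity;
        if (next == _current) return;

        _current = next;
        _reactor.DesireChange(next);
    }
}

public class MiniStateMachine : IReactToDesires
{
    public AgentState ActiveStateName { get; private set; } = AgentState.FoodSecurity;
    public int Transitions { get; private set; }

    public void DesireChange(AgentState desired)
    {
        if (desired != ActiveStateName)
        {
            ActiveStateName = desired;
            Transitions++;
        }
    }
}
```

Setting the belief to 0.2 moves the machine into Hunger, a later value of 0.5 leaves it there, and only a value of 0.8 or above returns it to FoodSecurity. The gap between the two thresholds is what keeps the agent from flickering between states when the value hovers near a single cutoff.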